“If at first you don’t succeed, try, try again.”

Systematic process improvement, as described in several previous posts (Adjusting complex process variables: Drawbead shapes, Virtual Tryout or Process Engineering?, What is passing?, In the rearview mirror, Infinitely adjustable presses, when should we stop adjusting?), is a method that uses multiple iterations of simulated forming processes in which the design inputs are intentionally varied over a range of potential settings. Its purpose is to systematically identify which input values address the forming issues that arise for given combinations of tool, process, or production settings.

Some of you might wonder whether such a process works in practice. We recently had the opportunity to run a trial of this method. We asked groups of process engineering professionals to manually interpret a simulated stamping process that had significant forming issues: visible splits, wrinkles, and draw-in beyond acceptable limits. Each engineer was free to define, based on their own experience, the next process combination with which to try to resolve those issues.

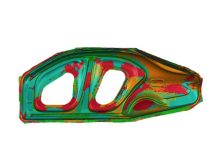

Sample process simulation result as starting point of issue resolution attempts

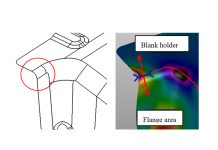

They could edit existing settings or define new ones for blank size and shape, drawbead restraints, binder pad pressure, tooling radii, and addendum wall angles. As one might imagine, with rooms full of engineers, a seemingly infinite number of combinations could be defined quickly. To find out which combination yielded the best result, participants had to run each simulation and evaluate its results before they could draw any conclusions.

This exercise was repeated at 10 locations with different participants. In all, the 137 participants created more than 340 discrete simulations, an average of about 2.5 iterations per participant. When all of these simulations were evaluated, only seven of the 340 resolved all of the forming issues and produced a “working process.”

Forty-three of the 340 simulations produced some level of meaningful improvement. These near misses could eventually have been combined to further resolve the forming issues, but doing so would still require the engineers to spend time and effort critically evaluating each combination of inputs before folding it into a new iteration: essentially seeding a new “educated guess” and simulating a 341st iteration to find out whether all the forming issues had been addressed.
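For a sense of scale, the counts reported above translate into the rates below. This is plain arithmetic on the published figures. It takes 340 as the simulation count (the text says “over 340”), so the true values may differ slightly, and it makes no assumption about whether the 43 improved runs include the seven working ones.

```python
# Workshop figures as reported in the text.
participants = 137
simulations = 340  # reported as "over 340"; 340 is used here as the count
fully_working = 7
improved = 43

def share(part: int, whole: int) -> float:
    """Return part/whole expressed as a percentage."""
    return 100.0 * part / whole

iterations_per_participant = simulations / participants
print(f"Iterations per participant: {iterations_per_participant:.1f}")
print(f"Runs resolving all issues: {share(fully_working, simulations):.1f}%")
print(f"Runs with meaningful improvement: {share(improved, simulations):.1f}%")
```

Roughly 2% of the manual guesses produced a working process, and about one in eight produced a meaningful improvement.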

As an alternative to creating another batch of guesses, the workshop participants were asked to collect the ranges of the forming parameters they had used, in order to define a matrix of upper and lower bounds for each parameter.

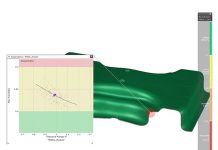

Sample matrix of parameter ranges set up by ten participants in 10 workshops
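The collection step can be sketched as follows. This is a minimal illustration, not the workshop's actual tooling: the parameter names and values are hypothetical, since the post does not list the settings participants chose. Each participant's trial is a mapping from parameter name to value, and the bounds for each parameter are simply the smallest and largest values anyone tried.

```python
from typing import Dict, List, Tuple

# Hypothetical parameter names and values, for illustration only.
Settings = Dict[str, float]

def bounds_from_trials(trials: List[Settings]) -> Dict[str, Tuple[float, float]]:
    """Collapse every participant's chosen settings into (lower, upper) bounds per parameter."""
    bounds: Dict[str, Tuple[float, float]] = {}
    for trial in trials:
        for name, value in trial.items():
            # Start from the first value seen, then widen the range as needed.
            lower, upper = bounds.get(name, (value, value))
            bounds[name] = (min(lower, value), max(upper, value))
    return bounds

trials = [
    {"binder_force_kN": 900.0, "drawbead_restraint": 0.40, "die_radius_mm": 6.0},
    {"binder_force_kN": 1200.0, "drawbead_restraint": 0.55, "die_radius_mm": 8.0},
    {"binder_force_kN": 1050.0, "drawbead_restraint": 0.30, "die_radius_mm": 10.0},
]

for name, (lower, upper) in bounds_from_trials(trials).items():
    print(f"{name}: {lower} .. {upper}")
```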

This matrix of ranges was then used to define a single input set for an AutoForm-Sigma Systematic Process Improvement (SPI) analysis. When defining an SPI run, users set a minimum and a maximum value for each process parameter; the software then automatically combines these input ranges into a set of simulation “realizations,” each representing a different combination of inputs.
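The post does not say how AutoForm-Sigma turns the bounds into realizations, so the sketch below substitutes a common design-of-experiments technique, Latin hypercube sampling, purely to illustrate the idea. The function name `latin_hypercube`, the parameter names, and the bounds are hypothetical. Each parameter's range is split into `n` equal strata, one value is drawn from each stratum, and the strata are shuffled independently per parameter so that every realization is a different mix of inputs.

```python
import random
from typing import Dict, List, Tuple

Bounds = Dict[str, Tuple[float, float]]

def latin_hypercube(bounds: Bounds, n: int, seed: int = 0) -> List[Dict[str, float]]:
    """Draw n realizations by Latin hypercube sampling (hypothetical helper).

    Each parameter's range is split into n equal strata, exactly one value is
    drawn per stratum, and the strata are shuffled independently per parameter.
    """
    rng = random.Random(seed)
    columns: Dict[str, List[float]] = {}
    for name, (lower, upper) in bounds.items():
        width = (upper - lower) / n
        # One uniformly placed point inside each stratum i.
        values = [lower + (i + rng.random()) * width for i in range(n)]
        rng.shuffle(values)
        columns[name] = values
    # Row i of the shuffled columns is realization i.
    return [{name: columns[name][i] for name in bounds} for i in range(n)]

bounds = {
    "binder_force_kN": (900.0, 1200.0),
    "drawbead_restraint": (0.30, 0.55),
    "die_radius_mm": (6.0, 10.0),
}
realizations = latin_hypercube(bounds, n=20, seed=42)
for r in realizations[:3]:
    print({k: round(v, 2) for k, v in r.items()})
```

Stratifying each range ensures that every part of every parameter's range is visited, even with a modest number of realizations, which plain random sampling does not guarantee.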

In this way, the entire range of sensible process parameters defined by the user, and all of the resulting outcomes, can be analyzed at once. At each workshop location, a single AutoForm-Sigma run was performed based on the combined ranges defined by the participants. In eight of the ten workshops, the SPI method achieved precisely what the participants sought: a clear definition of which process parameter values addressed all the forming issues.
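Once every realization has a result, finding the working settings amounts to filtering. The sketch below assumes a hypothetical `forming_ok` check with toy thresholds in place of a real forming simulation and its splitting, wrinkling, and draw-in criteria; the realizations are drawn uniformly at random for brevity. For each parameter it reports the span of values seen among passing realizations, or `None` when no realization passes, which is the case where no solution exists within the defined ranges.

```python
import random
from typing import Dict, List, Optional, Tuple

Realization = Dict[str, float]

def forming_ok(r: Realization) -> bool:
    """Hypothetical stand-in for a forming simulation: toy thresholds only,
    not a physical model of splits, wrinkles, or draw-in."""
    no_split = r["binder_force_kN"] < 1150.0 and r["die_radius_mm"] > 7.0
    no_wrinkle = r["binder_force_kN"] > 950.0
    draw_in_ok = r["drawbead_restraint"] > 0.35
    return no_split and no_wrinkle and draw_in_ok

def passing_window(realizations: List[Realization]) -> Optional[Dict[str, Tuple[float, float]]]:
    """Return min/max of each parameter over realizations passing every check,
    or None if no realization passes."""
    passing = [r for r in realizations if forming_ok(r)]
    if not passing:
        return None
    return {name: (min(r[name] for r in passing), max(r[name] for r in passing))
            for name in passing[0]}

rng = random.Random(1)
bounds = {
    "binder_force_kN": (900.0, 1200.0),
    "drawbead_restraint": (0.30, 0.55),
    "die_radius_mm": (6.0, 10.0),
}
realizations = [{n: rng.uniform(lo, hi) for n, (lo, hi) in bounds.items()} for _ in range(200)]
window = passing_window(realizations)
if window is None:
    print("No realization within the given ranges passes every check.")
else:
    for name, (lower, upper) in window.items():
        print(f"{name}: {lower:.2f} .. {upper:.2f}")
```

In general the per-parameter min/max of the passing realizations is only an envelope: because real process parameters interact, not every combination inside it is guaranteed to pass, so the individual passing realizations remain the safer reference points.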

At the other two workshops, the Sigma runs showed that no combination within the defined ranges addressed all the issues. It might be tempting to see this as a weakness of the approach. But consider the following: at those two locations, it was shown that the solution did not lie within the ranges the users had defined themselves. In other words, NO solution existed within the parameters they thought should work. How many more manual simulation setups and runs would it have taken to reach the same conclusion? Knowing that a solution does NOT exist (within the range of parameters deemed reasonable) is possibly even more valuable than being told what the solution is.